IAC

How deploying infrastructure as code enables quality management practices

Rein Remmel

12 July 2022

Infrastructure as code is an operations practice that lets system administrators and DevOps experts make their configurations reusable.

All kinds of management - whether it is infrastructure configuration management or any other type of administration - can be done in two different ways:

- It can be process-oriented, prescribing and micromanaging all the details in advance.

- Or it can be result-oriented, describing the intent and context that set a goal, and letting the team figure out the best path to achieving the results.

Most management experts will tell you that result-oriented tactics are easier to administer and more economical with resources. They are also more likely to get you to the actual results.

The same applies when collaborating with technology. You can give detailed orders to a computer if you understand all the different contexts in which these orders will be executed. But computers are usually much better than humans at computing and comparing multiple possible solutions in different contexts. This is why it often makes more sense to give the computer a final goal and let it make the best decisions along the way.

When we speak about deploying infrastructure in the DevOps context, there is a time and place for both approaches.

Why Infrastructure as Code Is Important in DevOps

Broadly, there are two different ways of deploying infrastructure:

- Doing manual setup for all components (system administrator labour)

- Programming code that automatically sets up infrastructure (infrastructure as code)

The manual approach can be faster when getting to know a new system, or when your main goal is to discover and try things out. Sometimes you don’t yet know how to describe your final destination. Then creating the setup by hand and adjusting the details on the go is the right way to proceed.

Graphical and command-line interfaces used for manual setup are process-oriented by nature. The result-oriented approach is not possible here, as you need to describe each task and process to the computer as you proceed.

The alternative is programming a computer to set up the infrastructure for you. The infrastructure as code approach is usually best for recurring tasks that you need to do more than once, and for everything that requires regular maintenance in the future. Having a piece of code execute a consistent setup is much faster than manually entering all the data every single time. It also avoids the errors that easily creep in with manual data input.

Deploying infrastructure as code also makes it possible to prescribe the setup in a result-oriented way. This declarative way of describing the intended state of infrastructure is also known as “infrastructure as data”. We tell the computer exactly what the results need to look like, and let it make appropriate decisions about the right path to reach that state.
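To make the difference concrete, here is a minimal sketch in Python. The "infrastructure" is only an in-memory dictionary, and all names (`imperative_setup`, `declarative_apply`, the server names) are hypothetical illustrations rather than part of any real tool. The imperative function replays fixed steps, while the declarative one compares the current state with the intended state and changes only what differs.

```python
# Hypothetical in-memory "infrastructure": server name -> number of CPUs.
# Nothing here talks to a real system; it only illustrates the two styles.

def imperative_setup(infra: dict[str, int]) -> None:
    """Process-oriented: a fixed list of steps, blind to the current state."""
    infra["web-1"] = 2         # step 1: create web-1
    infra["web-2"] = 4         # step 2: create web-2
    infra.pop("legacy", None)  # step 3: remove the old server


def declarative_apply(infra: dict[str, int], desired: dict[str, int]) -> list[str]:
    """Result-oriented: compare current and intended state, change only what differs."""
    changes = []
    for name, cpus in desired.items():
        if infra.get(name) != cpus:
            infra[name] = cpus
            changes.append(f"set {name} to {cpus} CPUs")
    for name in list(infra):
        if name not in desired:
            del infra[name]
            changes.append(f"remove {name}")
    return changes


desired_state = {"web-1": 2, "web-2": 4}
current = {"web-1": 2, "legacy": 1}

first_run = declarative_apply(current, desired_state)
second_run = declarative_apply(current, desired_state)  # nothing left to change
```

Running the declarative version a second time produces no changes, because the current state already matches the intended one. That property is what makes a described end state safe to apply repeatedly.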

How Does Infrastructure as Code Support the Best Quality Management Practices?

Infrastructure as code (IaC) builds a strong foundation for DevOps quality management and continuous improvement.

Usually about 70% of all resources are spent on operations and maintenance, and only 30% on innovation and development. Moving the needle towards more innovation is an obvious way to strive towards better business results, but this requires a sharp focus on continuous improvement. Make sure that your operations are extremely effective, and you will be able to spare more resources for innovation.

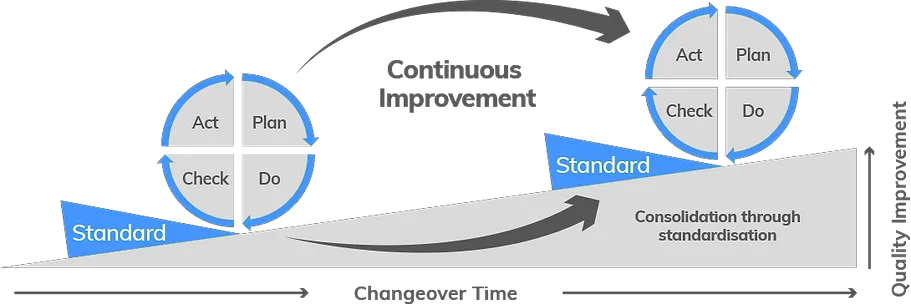

Modern quality management practices are based on the Deming cycle, also known as plan-do-check-act:

- Imagine what could be improved and how

- Implement a change

- Check your metrics - did you improve things?

- If yes, set the changed state as a new baseline standard. If not, roll back to the previous state.

When your DevOps team deploys IaC, it is much easier to establish and strengthen the baseline standards that get set during the Deming cycle. When each cycle builds on the results of the previous one, it is much easier to compare the changes and determine progress.
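One cycle of this loop could be sketched as follows. This is only an illustration under stated assumptions: the configuration is a plain dictionary, and the `score` metric and its numbers are invented for the example.

```python
from typing import Callable

Config = dict[str, int]


def improvement_cycle(
    baseline: Config,
    change: Config,
    measure: Callable[[Config], float],
) -> Config:
    """Run one improvement cycle and return the config that becomes the baseline."""
    candidate = {**baseline, **change}          # implement the proposed change
    if measure(candidate) > measure(baseline):  # check: did the metric improve?
        return candidate                        # yes: this is the new standard
    return baseline                             # no: roll back to the previous state


def score(config: Config) -> float:
    # Hypothetical metric: served requests (capped at 250) minus running cost.
    served = min(config["replicas"] * 100, 250)
    return served - config["replicas"] * 30


start = {"replicas": 2}
after_first = improvement_cycle(start, {"replicas": 3}, score)         # improves, kept
after_second = improvement_cycle(after_first, {"replicas": 4}, score)  # worse, rolled back
```

Because the baseline is itself a piece of data, comparing a candidate against it and rolling back are both trivial operations.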

Image credits: Clarity.


What Are the Benefits of Infrastructure as Code?

- It is repeatable, and provides consistent results

Editing infrastructure by hand means every change costs the same amount of work each time, and even more if mistakes or variations creep into the results.

Everything we already know and have learned should be delegated to a computer for optimisation and automation. This will produce consistent results that can become the standard before planning the next improvement cycle.

- It is really fast

A stable and consistent environment lets you quickly move along with further iterations. The ability to fulfil in-house infrastructure requests almost instantly is a great upgrade for shortening the in-house lead time.

IaC is an early investment that may take a few more hours to set up, but will save you a lot of time and labour costs in the long run when scaling.

- It is versionable

Whenever errors occur after making changes, you can see exactly what was changed, so it’s easier to troubleshoot and fix. When rollbacks are simple, the team will have less anxiety over making new changes. This helps keep them motivated to move fast while still preserving the service quality.

- It is secure

Repeatable and consistent deployments with less room for human error are less likely to cause security vulnerabilities. When all changes are auditable, you are able to verify in detail which version was live at any given moment, and when it was published.

- It is sustainable

IaC is self-documenting. Developers do not need to spend time on writing notes or producing additional explanatory documents. This radically lowers the need for re-learning and figuring out what was set up.

It also helps you optimise costs. When you always have a full overview of the infrastructure in use, it is easy to ensure you’re using exactly the resources you need at the best price point.
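The versioning and audit benefits above come from treating the infrastructure definition as text. As a small illustration, the sketch below compares two hypothetical versions of a definition using Python's standard `difflib` module. The file contents and the labels `v1` and `v2` are made up for the example; in practice a version control system would produce this view.

```python
import difflib

# Two hypothetical versions of the same infrastructure definition,
# as they might be stored in version control.
version_1 = """\
service: web
replicas: 2
port: 8080
"""
version_2 = """\
service: web
replicas: 4
port: 8080
"""

diff = list(
    difflib.unified_diff(
        version_1.splitlines(),
        version_2.splitlines(),
        fromfile="v1",
        tofile="v2",
        lineterm="",
    )
)

# Keep only the changed lines, skipping the "---" and "+++" file headers.
changed = [
    line
    for line in diff
    if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
]
print("\n".join(diff))
```

Here `changed` contains only the `replicas` line in its old and new form, which is exactly what a reviewer or auditor needs to see.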

What Problems Does Infrastructure as Code Solve?

- Use declarative configurations

To use the result-oriented approach you need to choose infrastructure components that allow declarative configurations. Not every hardware setup will have a suitable API that will actually let you describe the goals instead of the process.

For example, out-of-the-box hardware rarely comes with an API of its own. This is a challenge when building your own infrastructure - you will need to figure out how to implement an API for your infrastructure configuration management.

- Prefer cloud services over building your own infrastructure

Public cloud services like Amazon Web Services (AWS), Google Cloud and Microsoft Azure are specifically designed for management over API. Because of this, their native API support is generally better than in a system where the API gets bolted on after unboxing the hardware on location.

This is why public cloud services lend themselves to declarative configuration. Each provider acts as a central vendor, and its services are exposed through a consistent API, which gives you a uniform experience when managing them. You also don’t need to buy additional licences like you would for physical devices in your data centre.

It can be surprisingly challenging to provide comparable options when setting up your own infrastructure. Without enough in-house skills and resources, going down the lane of self-building can eventually make you give up and take the path of process-oriented micromanagement. While at a glance it may feel less expensive to own your infrastructure, the building process will absorb all of your most talented engineers, and provide mediocre results in terms of security and reliability when compared to the public cloud.

- Use orchestrating tools to enable IaC

While all public cloud services are designed for management over API to enable IaC, most APIs cannot support declarative configurations by themselves.

Here’s where orchestrating tools come into play. They implement the declarative configuration by reading the current state of your infrastructure and bringing it to the intended state with commands sent over the API. This lets you radically simplify your code-base.

Without an orchestrating tool, you would need to develop this code from scratch, along with all of the error handling. Writing out the full logic for interpreting instructions is a lot of code, so it only makes sense to use a proven and validated environment where the bugs for most common use cases are most likely to be fixed already.

We always recommend consuming the orchestrating tools as a managed service from a cloud provider, instead of setting up from scratch. This lets you focus on the value-adding applications and use IaC practices that really improve the service quality for the end customers.
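The core job of an orchestrating tool, as described above, can be sketched in a few lines. This is a simplified illustration and not how any particular tool is implemented: `FakeCloudApi` is a hypothetical in-memory stand-in for a provider API, and `plan` and `reconcile` are names invented for this example. Real tools add the error handling, retries and dependency ordering that make this hard to write yourself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Action:
    verb: str                    # "create", "update" or "delete"
    name: str
    spec: Optional[dict] = None  # intended settings; not needed for "delete"


def plan(current: dict[str, dict], desired: dict[str, dict]) -> list[Action]:
    """Work out which API calls take the current state to the intended state."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in current:
            actions.append(Action("create", name, spec))
        elif current[name] != spec:
            actions.append(Action("update", name, spec))
    for name in sorted(current):
        if name not in desired:
            actions.append(Action("delete", name))
    return actions


class FakeCloudApi:
    """Hypothetical stand-in for a provider API; keeps resources in memory."""

    def __init__(self, resources: dict[str, dict]) -> None:
        self.resources = dict(resources)

    def execute(self, action: Action) -> None:
        if action.verb == "delete":
            self.resources.pop(action.name, None)
        else:
            self.resources[action.name] = action.spec


def reconcile(api: FakeCloudApi, desired: dict[str, dict]) -> list[Action]:
    """Read the current state, plan the changes and send them through the API."""
    actions = plan(api.resources, desired)
    for action in actions:
        api.execute(action)
    return actions


api = FakeCloudApi({"db": {"size": "small"}, "old-cache": {"size": "small"}})
desired = {"db": {"size": "large"}, "web": {"size": "small"}}

applied = reconcile(api, desired)
verbs = [(a.verb, a.name) for a in applied]
```

After the first call, `api.resources` matches `desired`, and a second `reconcile` call returns an empty list because there is nothing left to change.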

Is Kubernetes Necessary for Infrastructure as Code?

Currently the de facto standard for orchestrating tools is Kubernetes - an open-source system for automating deployment, scaling and management of containerised workloads and services.

While it is not the only way to embrace the practices of infrastructure as code, it is surely the most common way to get all of your technologies under control within a single API.

The scope of technologies that could be managed with IaC is broad. From compute resources and networks to the software that manages application instances, each of these may have its own management method. To keep them all under your control, you either need to choose them so that they fit under a common API, or find intermediary software that provides a common API for all of these technologies.

This is the main value of Kubernetes - it can provide a common resource model and API for a wide range of back-end and infrastructure components. Whatever your stack is, it is highly likely that Kubernetes already covers all of your existing technologies.

How Should You Implement Kubernetes?

Keep in mind that Kubernetes is just a tool. Implementing Kubernetes should never become a goal in itself. Always make sure that by adding another tool to your stack you are actually creating value, not just adding complexity. Each new tool needs time and effort for implementation, and lots of resources for adjustment.

If you work on active software development, IaC and Kubernetes will surely speed up your work processes and lower the risk of mistakes. But they are a heavy investment in skills and knowledge. Before you start, make sure that upgrading your practices will compensate for the initial investment in training and the change of mindset.

If you think IaC could be useful for your organisation, do not hesitate to get in touch with Entigo to see how we can help! The infrastructure as code approach is a core for all Entigo services. We help organisations with cloud migration and modernisation, but can also provide more targeted services for companies of different sizes and growth stages.
